Amores (Ovid)

Amores is Ovid's first completed book of poetry, written in elegiac couplets. It was first published in 16 BC in five books, but Ovid, by his own account, later edited it down into the three-book edition that survives today. The book follows the popular model of the erotic elegy, as made famous by figures such as Tibullus or Propertius, but is often subversive and humorous with these tropes, exaggerating common motifs and devices to the point of absurdity.

While several literary scholars have called the Amores a major contribution to Latin love elegy,[1][2] they are not generally considered among Ovid's finest works[3] and "are most often dealt with summarily in a prologue to a fuller discussion of one of the other works".[4]

History

Ovid was born in 43 BC, the last year of the Roman Republic, and he grew up in Sulmo, a small town in the mountainous Abruzzo.[5] Based on the memoirs of Seneca the Elder, scholars know that Ovid attended school in his youth.[6] During the Augustan Era, boys attended schools that focused on rhetoric in order to prepare them for careers in politics and law.[7] Roman society placed great emphasis on the ability to speak well and deliver compelling speeches.[7] The rhetoric used in Amores reflects Ovid’s upbringing in this education system.[6]

Later, Ovid adopted the city of Rome as his home, and began celebrating the city and its people in a series of works, including Amores.[5] Ovid’s work follows three other prominent elegists of the Augustan Era, namely Gallus, Tibullus, and Propertius.[5]

Under Augustus, Rome underwent a period of transformation. Augustus was able to end a series of civil wars by concentrating the power of the government into his own hands.[8] Though Augustus held most of the power, he cloaked the transformation as a restoration of traditional values like loyalty and kept traditional institutions like the Senate in place, claiming that his Rome was the way it always should have been.[9] Under his rule, citizens were faithful to Augustus and the royal family, viewing them as “the embodiment of the Roman state.”[8] This notion arose in part through Augustus’ attempts to improve the lives of the common people by increasing access to sanitation, food, and entertainment.[10] The arts, especially literature and sculpture, took on the role of helping to communicate and bolster this positive image of Augustus and his rule.[11] It is in this historical context that Amores was written and takes place.

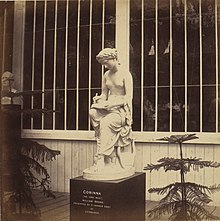

Speculations as to Corinna's real identity are many, if indeed she lived at all. It has been argued that she is a poetic construct copying the puella-archetype from other works in the love elegy genre. The name Corinna may have been a typically Ovidian pun based on the Greek word for "maiden", "kore". According to Knox, there is no clear woman to whom Corinna alludes; many scholars have concluded that the affair detailed throughout Amores is not based on real life, and instead reflects Ovid’s aim of playing with the genre of love elegy rather than recording real, passionate feelings for a woman.[12]

Summary

The Amores is a poetic first-person account of the poetic persona's love affair with an unattainable higher-class girl, Corinna. It is not always clear if the author is writing about Corinna or a generic puella.[13] Ovid does not assume a single woman as the subject of the lover persona's chronic obsession. The plot is linear, with a few artistic digressions such as an elegy on the death of Tibullus.

Book 1

- 1.1 - The poet announces that love will be his theme.

- 1.2 - He admits defeat to Cupid.

- 1.3 - He addresses his lover for the first time and lists her good qualities.

- 1.4 - He attends a dinner party; the poem is mostly a list of secret instructions to his lover, who is also attending the party along with her husband.

- 1.5 - He describes a visit Corinna, here named for the first time, makes to his rooms.

- 1.6 - He begs the doorkeeper to let him into the house to see his love.

- 1.7 - He hits his lover and is remorseful.

- 1.8 - Mostly a monologue from Dipsas, a tipsy procuress (bawd), to a young lady about how to deceive rich men. This is the longest poem in the book.

- 1.9 - The poet compares lovers with soldiers: every lover is a warrior, and Cupid has his camp.

- 1.10 - He complains that his mistress is demanding material gifts, instead of the gift of poetry.

- 1.11 - He asks Corinna's maid to take a message to her.

- 1.12 - The poet responds angrily when Corinna cannot visit.

- 1.13 - He addresses the dawn and asks it to wait, so he can stay longer with his mistress.

- 1.14 - He mocks Corinna for ruining her hair by dyeing it.

- 1.15 - The book ends with Ovid writing of the famous poets of the past, and claiming his name will be among them.

The book has a ring arrangement: the first and last poems concern poetry itself, and 1.2 and 1.9 both contain developed military metaphors.

Book 2

- 2.1 - The poet describes the sort of audience that he desires.

- 2.2 - The poet asks Bagoas, a woman's servant, to help him gain access to his mistress.

- 2.3 - The poet addresses a eunuch (probably Bagoas from 2.2) who is preventing him from seeing a woman.

- 2.4 - The poet describes his love for women of all sorts.

- 2.5 - The poet addresses his lover, whom he has seen being unfaithful at a dinner party.

- 2.6 - The poet mourns the death of Corinna's parrot.

- 2.7 - The poet defends himself to his mistress, who is accusing him of sleeping with her handmaiden Cypassis.

- 2.8 - The poet addresses Cypassis, asking her to keep their affair a secret from her mistress.

- 2.9a - The poet rebukes Cupid for causing him so much pain in love.

- 2.9b - The poet professes his addiction to love.

- 2.10 - The poet relates how he loves two girls at once, in spite of Graecinus' assertion that it was impossible.

- 2.13 - The poet prays to the gods about Corinna's abortion.

- 2.14 - The poet condemns the act of abortion.

Book 3

- 3.1 - Ovid imagines being confronted by the personified 'Elegy' and 'Tragedy', who start to argue since Tragedy believes Ovid never stops writing elegies. Elegy responds by arguing her case, telling Tragedy sarcastically that she should "take a lighter tone" and pointing out that Tragedy is even using Elegy's own (elegiac) metre to lament. Elegy seems to be victorious.

- 3.2 - Ovid woos a girl at the races, despite perhaps only just having met her. Ovid seems to be very desperate, as shown by how quickly he makes his move. The reader is left unsure whether his advances were truly accepted or whether Ovid misinterprets, perhaps deliberately, the girl's rejections.

- 3.3 - Ovid laments that his lover has not been punished for lying. He blames the gods for allowing beautiful women "excessive liberties" and for punishing and fighting against men. However, he then states that men need to "show more spirit" and that if he were a god, he would do the same as them with respect to beautiful women.

- 3.4 - Ovid warns a man against trying to guard his lover from adultery. He justifies this through a commentary on forbidden love and its allure.

- 3.5 - Ovid has a dream about a white cow that has a black mark. The dream symbolises an adulterous woman who now has been forever stained by the mark of adultery.

- 3.6 - Ovid describes a river preventing him from seeing his lover. He compares it to other rivers in Graeco-Roman mythology, such as the Inachos and the Asopus, and recalls their associated myths.

- 3.7 - Ovid complains of his erectile dysfunction.

- 3.8 - Ovid laments over his lover's avarice, complaining that poetry and the arts are now worth less than gold.

- 3.9 - An elegy for the dead Tibullus.

- 3.10 - The Festival of Ceres prevents Ovid from making love to his mistress. Characteristically, he complains that he should not be held responsible for the gods' mistakes.

- 3.11a - Ovid has freed himself from the shackles of his lover. He is ashamed of what he did for her and embarrassed at having stood outside her door. She exploited him, so he has ended it.

- 3.11b - The poet is conflicted. He loves and lusts after his lover but acknowledges her evil deeds and betrayals. He wishes she were less attractive so that he could more easily escape her grasp.

- 3.12 - Ovid laments that his poetry has attracted others to his lover. He questions why his descriptions of Corinna were interpreted so literally, when poets sing of stories of fiction or, at least, embellished fact. He stresses the importance of this poetic licence.

- 3.13 - Ovid describes the Festival of Juno and its origins; the festival is taking place in the town of his wife's birth, Falisca (Falerii). He finishes the poem hoping Juno will favour both him and the townspeople.

- 3.14 - Ovid instructs his partner to not tell him about her affairs. Instead, although he knows he cannot keep her to himself, he wants her to deceive him so that he can pretend not to notice.

- 3.15 - Ovid bids farewell to love elegy.

Style

Love Elegy

Ovid's Amores are firmly set in the genre of love elegy. The elegiac couplet was used first by the Greeks, originally for funeral epigrams, but it came to be associated with erotic poetry.[14] Love elegy as a genre was fashionable in Augustan times.[13]

The term ‘elegiac’ refers to the meter of the poem. Elegiac meter is made up of two lines, or a couplet, the first of which is a hexameter and the second a pentameter.[15] Ovid often inserts a break between the words of the third foot in the hexameter line, otherwise known as a strong caesura.[16] To reflect the artistic contrast between the different meters, Ovid also ends the pentameter line in an “‘iambic’ disyllable word.”[15]

Familiar themes include:

- Poems featuring the poet locked out at his mistress's door.

- Comparisons between the poet's life of leisure and respectable Roman careers, such as farming, politics, or the military.

The Amores has been regularly praised for adapting and improving on these older models with humor.

Narrative Structure

Scholars have also noted the argumentative structure of Ovid’s love elegy. While some critics have accused Ovid of being long-winded and of making the same point numerous times, others have noted the careful attention Ovid gives to the flow of his argument. Ovid usually starts a poem by presenting a thesis, then offers supporting evidence that gives rise to a theme near the end of the poem.[17] The final couplet of a poem often functions as a “punch-line” conclusion, not only summarizing the poem but also delivering the key thematic idea.[18] One example of Ovid’s “argumentative” structure can be found in II.4, where Ovid begins by stating that his weakness is a love for women. He then offers supporting evidence through his analysis of different kinds of beauty, before ending with a summary of his thesis in the final couplet.[17] This logical flow usually connects one thought to the next, and one poem to the next, suggesting that Ovid was particularly concerned with the overall shape of his argument and how each part fit into his overall narrative.[18]

Themes

Love and War

One of the prominent themes and metaphoric comparisons in Amores is that love is war.[19] This theme likely stems from the centrality of the military in Roman life and culture, and the popular belief that the military and its pursuits were of such high value that the subject lent itself well to poetic commemoration.[19]

While Amores is about love, Ovid employs military imagery to describe his pursuits of lovers.[19] One example of this is in I.9, where Ovid compares the qualities of a soldier to the qualities of a lover.[20] Here both soldiers and lovers share many of the same qualities, such as keeping guard, enduring long journeys and hardships, spying on the enemy, conquering cities like a lover’s door, and using tactics like the surprise attack to win.[20] This comparison not only supports the thematic metaphor that love is war, but also suggests that to be triumphant in both requires the same traits and methods.[21] Another place where this metaphor is exemplified is II.12, when Ovid breaks down the heavily guarded door to reach his lover Corinna. The siege of the door largely mirrors that of military victory.[22]

Another way this theme appears is through Ovid’s service as a soldier for Cupid.[23] The metaphor of Ovid as a soldier also suggests that Ovid lost to the conquering Cupid, and now must use his poetic ability to serve Cupid’s command.[23] The pairing of Cupid as commander and Ovid as dutiful soldier appears throughout Amores. This relationship begins to develop in I.1, where Cupid alters the form of the poem and Ovid follows his command.[23] Ovid then goes “to war in the service of Cupid to win his mistress.”[23] At the end of Amores in III.15, Ovid finally asks Cupid to terminate his service by removing Cupid’s flag from his heart.[23]

The opening of Amores and the setup of its structure also suggest a connection between love and war: the form of the epic, traditionally associated with the subject of war, is transformed into the form of love elegy. Amores I.1 begins with the same word as the Aeneid, "Arma" (an intentional comparison to the epic genre, which Ovid later mocks), as the poet describes his original intention: to write an epic poem in dactylic hexameter, "with material suiting the meter" (line 2), that is, war. However, Cupid "steals one (metrical) foot" (unum surripuisse pedem, I.1 ln 4), turning it into elegiac couplets, the meter of love poetry.

Ovid returns to the theme of war several times throughout the Amores, especially in poem nine of Book I, an extended metaphor comparing soldiers and lovers (Militat omnis amans, "every lover is a soldier" I.9 ln 1).

Humor, Playfulness, and Sincerity

Ovid's love elegies stand apart from others in the genre through their use of humor. Ovid’s playfulness stems from making fun of both the poetic tradition of the elegy and the conventions of his poetic ancestors.[24][25][26][27] While his predecessors and contemporaries took the love in the poetry rather seriously, Ovid spends much of his time playfully mocking their earnest pursuits. For example, women are depicted as most beautiful when they appear in their natural state according to the poems of Propertius and Tibullus.[27] Yet Ovid playfully mocks this idea in I.14, when he criticizes Corinna for dyeing her hair, taking it even one step further when he reveals that a potion eventually caused her hair to fall out altogether.[27]

Ovid’s ironic humor has led scholars to suggest that Amores functions as a kind of playful game, both in the context of its relationship with other poetic works and in the thematic context of love.[28] The theme of love as a playful, humorous game is developed through the flirtatious and lighthearted romance described.[29] Ovid’s witty humor undermines the idea that the relationships with the women in the poems are anything lasting or that Ovid has any deep emotional attachment to them.[29] His dramatizations of Corinna are one example that Ovid is perhaps more interested in poking fun at love than in being truly moved by it.[29] For instance, in II.11 Ovid faces Corinna leaving by ship and dramatically appeals to the gods for her safety. This is best understood through the lens of humor and Ovid’s playfulness, as to take it seriously would make the “fifty-six allusive lines…[look] absurdly pretentious if he meant a word of them.”[30]

Due to the humor and the irony in the piece, some scholars have come to question the sincerity of Amores.[26] Other scholars, though, find sincerity in the humor, knowing that Ovid is playing a game based on the rhetorical emphasis placed on Latin and on the various styles that poets and others adapted in Roman culture.[26]

Use of allusions

The poems contain many allusions to other works of literature beyond love elegy.

The poet and his immortality

Poems 1.1 and 1.15 in particular both concern the way poetry makes the poet immortal, while one of the things he offers a lover in 1.3 is that their names will be joined in poetry and famous forever.

Influence and reception

Other Roman authors

Ovid's popularity has remained strong to the present day. After Ovid's banishment in 8 AD, Augustus ordered his works removed from libraries and destroyed, but that seems to have had little effect on his popularity. He was always "among the most widely read and imitated of Latin poets".[31] Examples of Roman authors who followed Ovid include Martial, Lucan, and Statius.[32]

Post-classical era

The majority of Latin works have been lost, with very few texts rediscovered after the Dark Ages and preserved to the present day. However, in the case of Amores, there are so many manuscript copies from the 12th and 13th centuries that many are “textually worthless”, copying too closely from one another and containing mistakes caused by familiarity.[33] Theodulph of Orleans lists Ovid with Virgil among other favourite Christian writers, while Nigellus compared Ovid's exile to the banishment of St. John and the imprisonment of St. Peter.[34] Later, in the 11th century, Ovid was the favourite poet of Abbot (and later Bishop) Baudry, who wrote imitation elegies to a nun, albeit about Platonic love.[35] Others used his poems to demonstrate allegories or moral lessons, such as the 1340 Ovid Moralisé, which was translated with extensive commentary on the supposed moral meaning of the Amores.[36] Wilkinson also credits Ovid with directly contributing around 200 lines to the classic courtly love tale Roman de la Rose.[37]

Christopher Marlowe wrote a famous verse translation in English.

Footnotes

1. Jestin, Charbra Adams; Katz, Phyllis B., eds. (2000). Ovid: Amores, Metamorphoses: Selections (2nd ed.). Bolchazy-Carducci Publishers. p. xix. ISBN 1610410424.

2. Ingleheart, Jennifer; Radice, Katharine, eds. (2014). Ovid: Amores III, a Selection: 2, 4, 5, 14. A&C Black. p. 9. ISBN 978-1472502926.

3. Amores. Translated by Bishop, Tom. Taylor & Francis. 2003. p. xiii. ISBN 0415967414. "Critics have repeatedly felt that the poems lack sincerity [...]"

4. Boyd, Barbara Weiden (1997). Ovid's Literary Loves: Influence and Innovation in the Amores. University of Michigan Press. p. 4. ISBN 0472107593.

5. Knox, Peter; McKeown, J.C. (2013). The Oxford Anthology of Roman Literature. New York, NY: Oxford University Press. p. 258. ISBN 9780195395167.

6. Knox, Peter; McKeown, J.C. (2013). The Oxford Anthology of Roman Literature. New York, NY: Oxford University Press. p. 260. ISBN 9780195395167.

7. Knox, Peter; McKeown, J.C. (2013). The Oxford Anthology of Roman Literature. New York, NY: Oxford University Press. p. 259. ISBN 9780195395167.

8. Martin, Thomas (2012). Ancient Rome, From Romulus to Justinian. Yale University Press. p. 109. ISBN 9780300198317.

9. Martin, Thomas (2012). Ancient Rome, From Romulus to Justinian. Yale University Press. p. 110. ISBN 9780300198317.

10. Martin, Thomas (2012). Ancient Rome, From Romulus to Justinian. Yale University Press. pp. 177–123. ISBN 9780300198317.

11. Martin, Thomas (2012). Ancient Rome, From Romulus to Justinian. Yale University Press. p. 125. ISBN 9780300198317.

12. Knox, Peter; McKeown, J.C. (2013). The Oxford Anthology of Roman Literature. New York, NY: Oxford University Press. p. 259. ISBN 9780195395167.

13. Roman, L.; Roman, M. (2010). Encyclopedia of Greek and Roman Mythology. p. 57.

14. Turpin, William. "The Amores". Dickinson College Commentaries.

15. Bishop, Tom (2003). Ovid, Amores. Manchester, England: Carcanet Press Limited. p. xiv. ISBN 185754689X.

16. Turpin, William (2016). Ovid, Amores (Book 1). Cambridge, UK: Open Book Publishers. p. 15. ISBN 978-1-78374-162-5.

17. Thomas, Elizabeth (October 1964). "Variations on a Military Theme in Ovid's 'Amores'". Greece & Rome. 11 (2). Cambridge University Press: 157. doi:10.1017/S0017383500014169. JSTOR 642238. S2CID 162354950.

18. Turpin, William (2016). Ovid, Amores (Book 1). Cambridge, UK: Open Book Publishers. p. 5. ISBN 978-1-78374-162-5.

19. Thomas, Elizabeth (October 1964). "Variations on a Military Theme in Ovid's 'Amores'". Greece & Rome. 11 (2). Cambridge University Press: 151–165. doi:10.1017/S0017383500014169. JSTOR 642238. S2CID 162354950.

20. Thomas, Elizabeth (October 1964). "Variations on a Military Theme in Ovid's 'Amores'". Greece & Rome. 11 (2). Cambridge University Press: 158–159. doi:10.1017/S0017383500014169. JSTOR 642238. S2CID 162354950.

21. Thomas, Elizabeth (October 1964). "Variations on a Military Theme in Ovid's 'Amores'". Greece & Rome. 11 (2). Cambridge University Press: 159–160. doi:10.1017/S0017383500014169. JSTOR 642238. S2CID 162354950.

22. Thomas, Elizabeth (October 1964). "Variations on a Military Theme in Ovid's 'Amores'". Greece & Rome. 11 (2). Cambridge University Press: 161. doi:10.1017/S0017383500014169. JSTOR 642238. S2CID 162354950.

23. Thomas, Elizabeth (October 1964). "Variations on a Military Theme in Ovid's 'Amores'". Greece & Rome. 11 (2). Cambridge University Press: 155. JSTOR 642238.

24. Turpin, William (2016). Ovid, Amores (Book 1). Open Book Publishers. p. 4. ISBN 978-1-78374-162-5.

25. Thomas, Elizabeth (October 1964). "Variations on a Military Theme in Ovid's 'Amores'". Greece & Rome. 11 (2). Cambridge University Press: 154. doi:10.1017/S0017383500014169. JSTOR 642238. S2CID 162354950.

26. Bishop, Tom (2003). Ovid, Amores. Manchester, England: Carcanet Press Limited. p. xii. ISBN 185754689X.

27. Ruden, Sarah (2014). "Introduction" in Ovid's Erotic Poems: Amores and Ars Amatoria. Philadelphia, Pennsylvania: University of Pennsylvania Press. p. 15. ISBN 978-0-8122-4625-4.

28. Bishop, Tom (2003). Ovid, Amores. Manchester, England: Carcanet Press Limited. p. xiii. ISBN 185754689X.

29. Ruden, Sarah; Krisak, Len (2014). "Introduction" in Ovid's Erotic Poems: Amores and Ars Amatoria. Philadelphia, Pennsylvania: University of Pennsylvania Press. p. 1. ISBN 978-0-8122-4625-4.

30. Ruden, Sarah; Krisak, Len (2014). "Introduction" in Ovid's Erotic Poems: Amores and Ars Amatoria. Philadelphia, Pennsylvania: University of Pennsylvania Press. p. 2. ISBN 978-0-8122-4625-4.

31. Reynolds, L.D.; Wilson, N.G. (1984). Texts and Transmission: A Survey of the Latin Classics. p. 257.

32. Robathan, p. 191.

33. Benediktson, T. (1989). Propertius: Modernist Poet of Antiquity. SIU Press. pp. 2–3.

34. Robathan, D. (1973). "Ovid in the Middle Ages", in Binns, J. W. (ed.), Ovid. London: Routledge & K. Paul. p. 192. ISBN 9780710076397.

35. Wilkinson, L.P. (1955). Ovid Recalled. Duckworth. p. 377.

36. Wilkinson, p. 384.

37. Wilkinson, p. 392.

Bibliography

- Editions and commentaries

- William Turpin (2016). Ovid, Amores (Book 1). Dickinson College commentaries. Open Book Publishers. doi:10.11647/obp.0067. ISBN 978-1-78374-162-5.

- Ovidius Naso, Publius (2023). Amores. volume 4.i: A commentary on book three: elegies 1 to 8 / J.C. McKeown and R.J. Littlewood. Liverpool: Francis Cairns. ISBN 9780995461239.

- Studies

- L.D. Reynolds; N.G. Wilson, Texts and Transmission: A Survey of the Latin Classics (Oxford: Clarendon Press, 1984)

- D. Robathan, "Ovid in the Middle Ages" in Binns, Ovid (London 1973)

- L.P. Wilkinson, Ovid Recalled (Duckworth 1955)

External links

- Latin text

- Marlowe's translation

- Wikisource translation of Amores

- David Drake's translation